Agentic AI is having its "apps in 2008" moment: everyone can build a demo fast, but getting to production (reliably, securely, and at scale) is where most experiments stall. This is a practical, code-first walkthrough of how Microsoft showed, at Microsoft Ignite 2025, that you can close that gap using Foundry Agent Service, the new Agents API built on OpenAI Responses, and the Foundry Control Plane for evaluation and operations.

What follows is a deep technical breakdown of the core architecture, the "dev → prod" transition model, how tools/memory/workflows work, and how to operationalize agents with evaluators + tracing.

You can review the full session here.

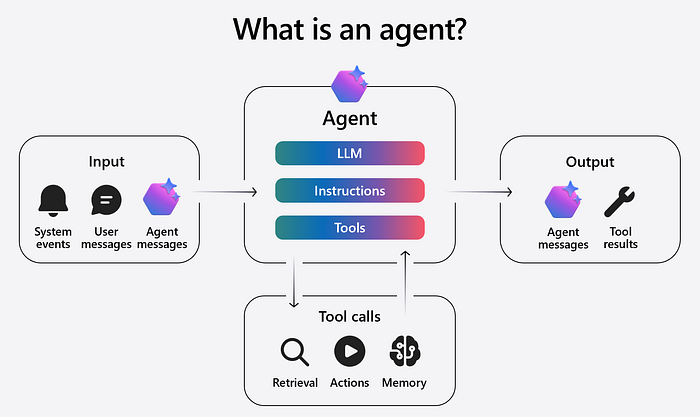

The core mental model: what an "agent" is in this talk

Simply put, an agent is a predictable unit of work that takes inputs (system events + user/agent messages), applies instructions + a model, and uses tools (retrieval, actions, memory) to produce outputs (agent messages + tool results).

A useful way to visualize it:

    User/App
       |
       v
    Agent Runtime (instructions + policy)
       |
       v
    Model (reasoning)
       |
       +--> Tool calls (retrieval/actions/code)
       |        |
       |        v
       |    Tool results
       |
       v
    Final response (+ artifacts/logs/traces)

The important shift is that agents aren't "chatbots with prompts"; they're systems: tools, data boundaries, identity, evaluation, and observability become first-class engineering concerns.
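The loop in the diagram can be sketched in a few lines of plain Python. This is a minimal illustration, not Foundry's runtime: `scripted_model`, `run_agent`, and the `lookup` tool are hypothetical names, and the "model" is a scripted stand-in that asks for one tool call and then answers.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Union

Message = tuple[str, str]  # (role, content)


@dataclass
class ToolCall:
    name: str
    argument: str


@dataclass
class AgentRun:
    final_response: str
    trace: list[str] = field(default_factory=list)


def scripted_model(messages: list[Message]) -> Union[ToolCall, str]:
    """Hypothetical stand-in for an LLM: request one lookup, then answer."""
    tool_results = [content for role, content in messages if role == "tool"]
    if not tool_results:
        user_text = messages[-1][1]
        return ToolCall(name="lookup", argument=user_text)
    return f"Answer based on: {tool_results[-1]}"


def run_agent(
    user_message: str,
    instructions: str,
    model: Callable[[list[Message]], Union[ToolCall, str]],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> AgentRun:
    messages: list[Message] = [("system", instructions), ("user", user_message)]
    trace: list[str] = []
    for _ in range(max_steps):
        decision = model(messages)
        if isinstance(decision, str):  # the model produced the final response
            trace.append("final")
            return AgentRun(decision, trace)
        result = tools[decision.name](decision.argument)  # execute the tool call
        trace.append(f"tool_call:{decision.name}")
        messages.append(("tool", result))  # feed the result back to the model
    raise RuntimeError("agent exceeded max_steps without a final response")


run = run_agent(
    "reset password",
    "Be concise.",
    scripted_model,
    {"lookup": lambda query: f"docs about {query}"},
)
print(run.final_response)  # Answer based on: docs about reset password
print(run.trace)           # ['tool_call:lookup', 'final']
```

The `max_steps` bound and the recorded `trace` are the two details that make the loop behave like a system rather than a prompt: the run always terminates, and every tool call leaves evidence.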

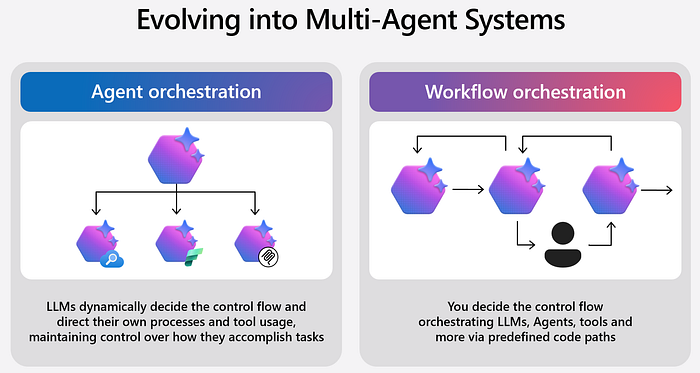

Two orchestration styles: "agent orchestration" vs "workflow orchestration."

Multi-agent systems emerge in two ways:

- Agent orchestration: the LLM dynamically decides control flow (what to call, when, and in what order).

- Workflow orchestration: you define deterministic control paths (multi-step flows coordinating agents/tools).

This matters because production systems almost always need both:

- Dynamic reasoning where it's safe and valuable,

- Deterministic workflows where you need guarantees, auditability, or compliance.
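To make the contrast concrete, here is a small sketch of both styles over the same set of steps. Everything in it is illustrative: `run_workflow`, `run_agent_orchestrated`, and `scripted_chooser` are hypothetical names, and the chooser is a scripted stand-in for the control-flow decision an LLM would make.

```python
from __future__ import annotations

from typing import Callable, Optional

State = dict
Step = Callable[[State], State]


def validate(state: State) -> State:
    return {**state, "valid": bool(state.get("request"))}


def retrieve(state: State) -> State:
    return {**state, "context": f"docs for {state['request']}"}


def summarize(state: State) -> State:
    return {**state, "summary": f"summary of {state['context']}"}


def run_workflow(state: State, steps: list[Step]) -> State:
    """Workflow orchestration: the step order is fixed by the author."""
    for step in steps:
        state = step(state)
    return state


def run_agent_orchestrated(
    state: State,
    choose_next: Callable[[State], Optional[str]],
    tools: dict[str, Step],
    max_steps: int = 5,
) -> State:
    """Agent orchestration: a chooser (an LLM in practice) picks each next tool."""
    for _ in range(max_steps):
        tool_name = choose_next(state)
        if tool_name is None:  # the chooser decided it is done
            break
        state = tools[tool_name](state)
    return state


def scripted_chooser(state: State) -> Optional[str]:
    """Hypothetical stand-in for the model's control-flow decision."""
    if "context" not in state:
        return "retrieve"
    if "summary" not in state:
        return "summarize"
    return None


fixed = run_workflow({"request": "vpn setup"}, [validate, retrieve, summarize])
dynamic = run_agent_orchestrated(
    {"request": "vpn setup"},
    scripted_chooser,
    {"validate": validate, "retrieve": retrieve, "summarize": summarize},
)
print(sorted(fixed))    # ['context', 'request', 'summary', 'valid']
print(sorted(dynamic))  # ['context', 'request', 'summary']
```

Note that the dynamic run never called `validate`: nothing forced it to. That is the guarantee gap a deterministic workflow closes.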

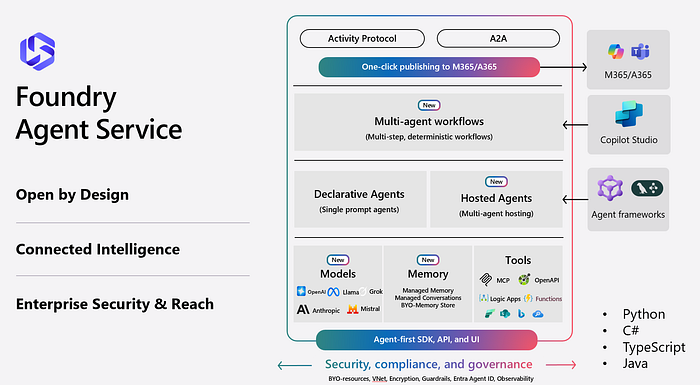

The platform strategy: Foundry Agent Service is "built on Responses."

A major point announced during Microsoft Ignite 2025 is that Foundry Agent Service is a unified agent platform built on the OpenAI Responses API.

The migration message is clear:

- "Responses code is already Agents code"

- Minimal changes to deploy and "unlock agent capabilities"

- Persisted agents add identity, observability, and memory

- …And they integrate with workflows/connectors.

That matches Microsoft's Agents API positioning: the Foundry Agents API is "based on Responses API" and introduces new agent types (Azure OpenAI agents, workflow agents, custom agents), with persisted conversations and agent artifacts.

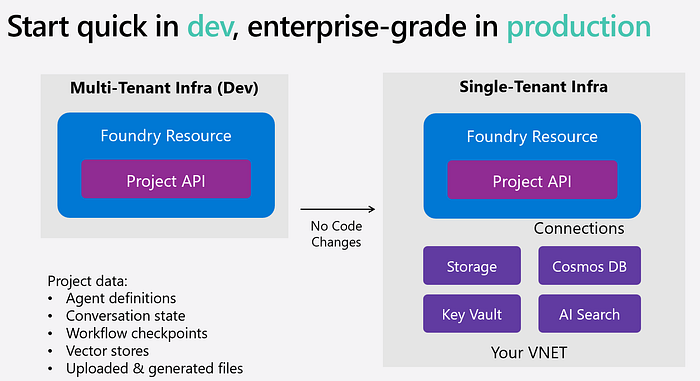

Why this matters: start quick in dev, be enterprise-grade in prod

The following image shows the productionization model:

- Dev: multi-tenant infra, Foundry Resource + Project API

- Prod: single-tenant infra with no code changes, plus your own Connections/Storage/Key Vault/Cosmos DB/AI Search, inside your VNet

- Project data includes agent definitions, conversation state, workflow checkpoints, vector stores, uploaded/generated files

Bottom line: prototype quickly, then move to production by "bringing your own storage… Cosmos DB, Key Vault, and AI Search… inside your own VNet" while centralizing governance in the Foundry Control Plane.

Architecturally, this is significant because it cleanly separates two things:

- The agent logic (code + prompts + tools)

- The enterprise control planes (identity, data boundaries, evaluation, monitoring)
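One way to picture that separation is to keep the agent definition in one place and the environment description in another. The sketch below is purely illustrative: `Environment`, `deploy`, the resource keys, and the endpoint URLs are all hypothetical, and in practice the bring-your-own resources are attached at the platform level rather than in application code.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Mapping, Optional

# Hypothetical labels for the bring-your-own resources named in the session.
BYO_KEYS = ("storage", "cosmos_db", "key_vault", "ai_search", "vnet_subnet")


@dataclass(frozen=True)
class Environment:
    name: str
    project_endpoint: str
    byo_resources: Mapping[str, Optional[str]]

    @property
    def customer_managed(self) -> bool:
        """True only when every bring-your-own resource is attached."""
        return all(self.byo_resources.get(key) for key in BYO_KEYS)


def agent_definition() -> dict:
    """The agent logic: identical regardless of which environment hosts it."""
    return {
        "name": "support-agent",
        "instructions": "Answer from the attached files.",
        "tools": ["file_search", "code_interpreter"],
    }


def deploy(env: Environment) -> dict:
    """Pair the unchanged agent definition with an environment description."""
    return {
        "environment": env.name,
        "endpoint": env.project_endpoint,
        "data_plane": "customer" if env.customer_managed else "platform",
        "agent": agent_definition(),
    }


dev = Environment("dev", "https://dev.example.invalid/project", {})
prod = Environment(
    "prod",
    "https://prod.example.invalid/project",
    {
        "storage": "contoso-storage",
        "cosmos_db": "contoso-cosmos",
        "key_vault": "contoso-kv",
        "ai_search": "contoso-search",
        "vnet_subnet": "contoso-vnet/agents",
    },
)

print(deploy(dev)["data_plane"])   # platform
print(deploy(prod)["data_plane"])  # customer
```

The agent payload is byte-for-byte the same in both deployments; only the environment record differs.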

Tools: the "tool catalog" is the real product surface

In Foundry, you can open the Agent Builder and add tools. The catalog has parity with Responses tools (file search, web search, code interpreter) and adds Microsoft-specific options such as Azure AI Search, Fabric Data Agent, SharePoint, and "MCP connectors", along with OpenAPI/A2A custom tools.

This is where Foundry's tool ecosystem matters most in practice: the "agent" becomes a routing layer across capabilities that already exist in your enterprise stack.

Microsoft Learn (tools catalog concept): https://learn.microsoft.com/en-us/azure/ai-foundry/agents/concepts/tools-catalog?view=foundry&WT.mc_id=AZ-MVP-5000671

Now let's take a look at two scenarios.

Scenario 1: "keep your code" — run Responses-style code, then upgrade to agents

Scenario 1 is about starting with the exact same "Responses-style" tool-calling code you already have, and then deploying it on Azure AI Foundry Agent Service with minimal changes — so you "upgrade to agents" without rewriting your app logic.

What happens in the scenario:

- You write a Responses-style request that uses built-in tools (in the talk: things like file search and code interpreter) to answer questions grounded on files and optionally generate artifacts.

- You then swap the runtime/client setup so that the same request runs via Foundry Agent Service. The business logic stays the same — same prompt pattern, same tool calls.

- From there, you can run as an ephemeral agent for fast experimentation (including trying different models/providers), and later persist the agent so it has a managed identity/lifecycle in the platform (shows up in the portal, can be tested in playground, and becomes a governed asset).

In short: Scenario 1 is the "no-drama migration path" from Responses code → Foundry agents, letting you keep your implementation while gaining agent platform capabilities like lifecycle management, observability hooks, and (when persisted) memory/identity.

The flow is intentionally incremental:

- Start with Responses-style code using tools like file search + code interpreter to answer questions grounded on uploaded files.

- Swap only the client initialization block to run on Foundry Agent Service — logic stays the same.

- Use an ephemeral agent reference to test other models (example: switching from GPT-5.1 to Claude Sonnet 4.5) even where "Responses API" tooling isn't natively available for that model — Foundry handles the abstraction.

This is a subtle but important engineering advantage: you can decouple agent runtime + tools from model provider constraints, and test frontier models without rewriting your app.
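A rough sketch of the "swap only the client" idea follows. The request shape (`model`, `input`, `tools` with `file_search` and `code_interpreter`) and the `client.responses.create(...)` / `output_text` call pattern follow the OpenAI Responses API; everything else is an assumption made so the example runs offline: `FakeClient` stands in for whichever real client you construct, and the vector store ID and model names are hypothetical placeholders. How the session obtains the Foundry-backed client is not reproduced here.

```python
from __future__ import annotations

from types import SimpleNamespace
from typing import Any


def build_request(question: str, vector_store_id: str, model: str) -> dict[str, Any]:
    """Responses-style request body: prompt plus built-in tools."""
    return {
        "model": model,
        "input": question,
        "tools": [
            {"type": "file_search", "vector_store_ids": [vector_store_id]},
            {"type": "code_interpreter", "container": {"type": "auto"}},
        ],
    }


def ask(client: Any, request: dict[str, Any]) -> str:
    """Business logic: unchanged no matter which client is passed in."""
    response = client.responses.create(**request)
    return response.output_text


class FakeResponses:
    """Offline stand-in that records requests instead of calling a service."""

    def __init__(self) -> None:
        self.calls: list[dict[str, Any]] = []

    def create(self, **kwargs: Any) -> SimpleNamespace:
        self.calls.append(kwargs)
        return SimpleNamespace(output_text=f"[{kwargs['model']}] answer")


class FakeClient:
    def __init__(self) -> None:
        self.responses = FakeResponses()


# Only this block would change between runtimes (for example an OpenAI client
# versus a client obtained from a Foundry project); FakeClient is a placeholder.
client = FakeClient()

for model_name in ("gpt-5.1", "claude-sonnet-4.5"):  # hypothetical deployment names
    request = build_request("What is the refund policy?", "vs_hypothetical_123", model_name)
    print(ask(client, request))
```

Because `ask` depends only on the `responses.create` shape, trying another model is a one-string change and trying another runtime is a one-block change.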

Ephemeral vs persisted agents: what you gain by persisting

After running ephemeral agents, the presenters persist the agent definition via the Foundry SDK. The key "why persist?" answer is operational:

- The persisted agent shows up in the portal with its model/instructions

- You can interact in the Playground

- And you can publish previews (including to Teams / M365 Copilot in the demo narrative)

Simply put, persisted agents gain identity, observability, and memory.

Think of persistence as moving from "stateless tool-calling" to a governed, inspectable, lifecycle-managed asset.

Observability + evaluation: "break the black box" for agent systems

The Foundry Control Plane is the place to evaluate, monitor, trace, govern, and optimize the agent fleet-wide.

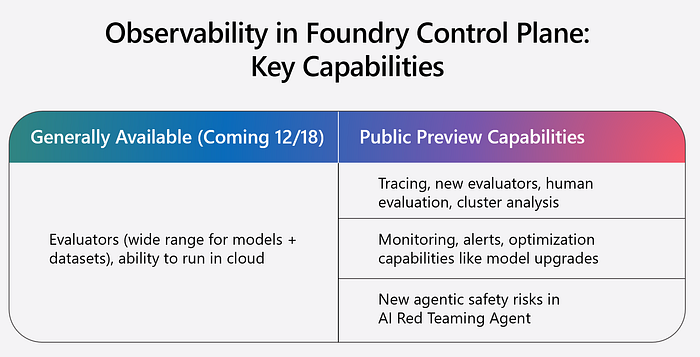

The above image lists key capability buckets:

- Evaluators (GA coming 12/18), runnable locally or in the cloud.

- Tracing (public preview).

- Monitoring/alerts/optimization (including model upgrades).

- AI red teaming agent for "new agentic safety risks".

The evaluator taxonomy is practical:

- Quality (groundedness, relevance, coherence, fluency, similarity, NLP metrics, AOAI graders)

- Risk & Safety (jailbreaks, self-harm, protected material, sensitive data leakage, code vulnerability, etc.)

- Agent-specific (intent resolution, tool selection/input accuracy, task completion, navigation efficiency, etc.)
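To give a feel for what an agent-specific evaluator measures, here is a deliberately simple sketch that scores recorded runs for tool selection and task completion. It is not the Foundry evaluation SDK: `RecordedRun`, `tool_selection_score`, and `evaluate` are hypothetical names, and real evaluators are considerably more nuanced than set overlap.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RecordedRun:
    query: str
    expected_tools: set[str]
    called_tools: list[str]
    completed: bool


def tool_selection_score(run: RecordedRun) -> float:
    """Fraction of expected tools that were actually called."""
    if not run.expected_tools:
        return 1.0
    hits = run.expected_tools & set(run.called_tools)
    return len(hits) / len(run.expected_tools)


def evaluate(runs: list[RecordedRun]) -> dict[str, float]:
    """Average each metric over a batch of recorded runs."""
    if not runs:
        raise ValueError("need at least one recorded run")
    count = len(runs)
    return {
        "tool_selection": sum(tool_selection_score(r) for r in runs) / count,
        "task_completion": sum(r.completed for r in runs) / count,
    }


runs = [
    RecordedRun("summarize the report", {"file_search"}, ["file_search"], True),
    RecordedRun(
        "chart last quarter's sales",
        {"file_search", "code_interpreter"},
        ["file_search"],
        False,
    ),
]
print(evaluate(runs))  # {'tool_selection': 0.75, 'task_completion': 0.5}
```

Scores like these become useful once they gate something, for example failing a CI job when `tool_selection` drops below an agreed threshold.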

Microsoft Learn (evaluation SDK / evaluators): https://learn.microsoft.com/en-us/azure/ai-foundry/how-to/evaluate-sdk?view=foundry-classic&WT.mc_id=AZ-MVP-5000671

Microsoft Learn (SDK overview): https://learn.microsoft.com/en-us/azure/ai-foundry/how-to/develop/sdk-overview?view=foundry-classic&WT.mc_id=AZ-MVP-5000671

Scenario 2: Multi-agent workflows (deterministic orchestration with checkpoints)

The second scenario is the "real system" moment: the presenters build a realistic IT support workflow that routes an issue through multiple agents and tools. It is implemented as a deterministic, multi-step agent workflow (not "free-form" agent reasoning).

Here's the flow, end-to-end:

- A user reports an issue (for example: an AKS/Kubernetes problem like ImagePullBackOff).

- The workflow first invokes a "ticketing agent" that creates an Azure DevOps work item via an OpenAPI-connected tool — so the incident is logged immediately.

- The workflow then routes the same context to a specialist troubleshooting agent (the "cloud platform agent"), which is grounded on Kubernetes documentation to produce structured troubleshooting steps.

- If the agent can't resolve the issue, the workflow follows a predefined escalation path (instead of improvising).

- Crucially, the workflow uses checkpoints: after each step, the system saves state so the conversation can pause/resume reliably with the same thread/run context.

In short: it's a stateful support automation that combines tool-based actions (create ticket) + grounded diagnosis + deterministic routing and recovery, with checkpointed state so it behaves predictably in production.

What stands out technically:

1) Workflows are stateful, and checkpointed

When the user says "hi", the workflow runs, then enters a suspended state — checkpointing the workflow state so the same conversation ID can continue later.

This is the missing piece for production: long-running, multi-step automations need durable state.
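The suspend/resume behavior can be approximated with a checkpoint keyed by conversation ID. The sketch below is an assumption-laden toy, not the service's implementation: `CheckpointStore` is an in-memory stand-in for durable storage, and `advance`, `create_ticket`, and `troubleshoot` are hypothetical names.

```python
from __future__ import annotations

import json
from typing import Callable

State = dict
StepFn = Callable[[State, str], State]


def create_ticket(state: State, message: str) -> State:
    return {**state, "ticket": f"work item for: {message}"}


def troubleshoot(state: State, message: str) -> State:
    return {**state, "advice": f"steps for: {state['ticket']}"}


STEPS: list[StepFn] = [create_ticket, troubleshoot]


class CheckpointStore:
    """In-memory stand-in for durable storage; checkpoints are JSON strings."""

    def __init__(self) -> None:
        self._blobs: dict[str, str] = {}

    def save(self, conversation_id: str, checkpoint: dict) -> None:
        self._blobs[conversation_id] = json.dumps(checkpoint)

    def load(self, conversation_id: str) -> dict:
        raw = self._blobs.get(conversation_id)
        return json.loads(raw) if raw else {"next_step": 0, "state": {}}


def advance(store: CheckpointStore, conversation_id: str, message: str) -> str:
    """Run exactly one step, checkpoint, and report the workflow status."""
    checkpoint = store.load(conversation_id)
    index = checkpoint["next_step"]
    if index >= len(STEPS):
        return "completed"
    checkpoint["state"] = STEPS[index](checkpoint["state"], message)
    checkpoint["next_step"] = index + 1
    store.save(conversation_id, checkpoint)  # durable state after every step
    return "completed" if checkpoint["next_step"] == len(STEPS) else "suspended"


store = CheckpointStore()
print(advance(store, "conv-1", "ImagePullBackOff on AKS"))  # suspended
print(advance(store, "conv-1", "still failing"))            # completed
print(store.load("conv-1")["state"]["advice"])
# steps for: work item for: ImagePullBackOff on AKS
```

Because each call reloads the checkpoint instead of relying on process memory, the second `advance` could run minutes or days later, on a different worker, and still pick up at the right step.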

2) Tool integration is first-class

The workflow sends the issue first to a "ticketing agent" to create an Azure DevOps work item via OpenAPI integration — before troubleshooting begins.

That's a great pattern: log + trace + accountability before the system starts acting.

3) It routes to a specialized agent grounded on domain docs

Then it dispatches to a "Cloud Platform agent" grounded in Kubernetes docs to troubleshoot "ImagePullBackOff" and escalate if resolution fails.
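The "grounded answer or predefined escalation" rule is easy to express as a small routing function. In this hedged sketch, `DOCS` is a tiny hard-coded stand-in for a documentation index, "no matching documentation" stands in for "could not resolve", and `cloud_platform_agent`, `handle`, and the route names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Tiny stand-in for a documentation index; a real agent would use retrieval.
DOCS = {
    "imagepullbackoff": [
        "Run `kubectl describe pod <pod>` and read the Events section.",
        "Verify the image name and tag exist in the registry.",
        "Check that the pod's imagePullSecrets grant access to the registry.",
    ],
}


@dataclass
class Outcome:
    route: str
    steps: list[str]


def cloud_platform_agent(issue: str) -> list[str]:
    """Return documented steps for the first known keyword in the issue."""
    lowered = issue.lower()
    for keyword, steps in DOCS.items():
        if keyword in lowered:
            return steps
    return []


def handle(issue: str) -> Outcome:
    steps = cloud_platform_agent(issue)
    if steps:
        return Outcome(route="cloud_platform_agent", steps=steps)
    # Predefined escalation path: no improvised answer when grounding fails.
    return Outcome(route="escalate_to_human", steps=[])


print(handle("Pod stuck in ImagePullBackOff on AKS").route)  # cloud_platform_agent
print(handle("The office printer is jammed").route)          # escalate_to_human
```

The important property is that the fallback branch is written by a person ahead of time, so an unanswerable issue ends in a known place.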

4) The workflow definition is a YAML-like spec, backed by a managed Agent Framework

The session describes it as an internal YAML specification with conditional logic and nodes like "Invoke Azure Agent", and notes that the service runs "a managed version of Agent Framework".

Microsoft Learn (workflows concept): https://learn.microsoft.com/en-us/azure/ai-foundry/agents/concepts/workflows?view=foundry-classic&WT.mc_id=AZ-MVP-5000671

Hosted agents: when you need "real code" inside the agent mesh

Hosted Agents are a way to run open-source agent framework solutions "in containers as just another kind of agent," and the workflow YAML can invoke hosted agents as easily as it can invoke prompt agents.

This is the cleanest split I've seen in a modern agent platform:

- Prompt agents for low-code speed

- Hosted agents for full control (framework choice, custom runtimes, specialized dependencies)

Microsoft Learn (hosted agents): https://learn.microsoft.com/en-us/azure/ai-foundry/agents/concepts/hosted-agents?view=foundry-classic&WT.mc_id=AZ-MVP-5000671

Reference architecture: how the pieces fit together

Here's a sample architecture:

    Client App / Copilot channel (Teams / M365)
            |
            v
    Foundry Project (Agent definitions + conversation state + files + vector stores + workflow checkpoints)
            |
            v
    Agent Runtime (Agents API built on Responses)
            |
            |----------------------------------|
            |                                  |
            v                                  v
    Prompt Agents (fast iteration)     Hosted Agents (containers/frameworks)
            |                                  |
            +--> Tool Catalog (File search, code interpreter, web search,
            |    Azure AI Search, SharePoint, Fabric, MCP connectors,
            |    OpenAPI tools, A2A tools)
            |
            v
    Foundry Control Plane (evaluation + tracing + monitoring + governance)

Key design intent:

- Move from dev multi-tenant to prod single-tenant by attaching your own Storage/Cosmos/Key Vault/AI Search/VNet without rewriting code

- Operationalize with evaluators + tracing + monitoring, and treat safety as an evaluated property, not a prompt hope
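As a small illustration of what "instrumenting traces" means at the code level, the sketch below records nested spans with a context manager. It is a toy recorder under stated assumptions, not the Foundry tracing feature or any real tracing library: `Tracer`, `span`, and the attribute names are hypothetical.

```python
from __future__ import annotations

import time
from contextlib import contextmanager
from typing import Any, Iterator


class Tracer:
    """Toy span recorder: collects name, parent, attributes, status, duration."""

    def __init__(self) -> None:
        self.spans: list[dict[str, Any]] = []
        self._stack: list[str] = []

    @contextmanager
    def span(self, name: str, **attributes: Any) -> Iterator[dict[str, Any]]:
        record: dict[str, Any] = {
            "name": name,
            "parent": self._stack[-1] if self._stack else None,
            "attributes": attributes,
            "status": "ok",
        }
        self._stack.append(name)
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            record["status"] = f"error: {exc}"  # keep the failure on the span
            raise
        finally:
            record["duration_s"] = time.perf_counter() - start
            self._stack.pop()
            self.spans.append(record)  # spans are recorded as they finish


tracer = Tracer()
with tracer.span("agent_run", conversation_id="conv-1"):
    with tracer.span("tool_call", tool="file_search"):
        pass  # the tool would execute here

for finished in tracer.spans:
    print(finished["name"], finished["parent"], finished["status"])
```

Even this much structure answers the questions that matter during an incident: which tool ran, under which conversation, how long it took, and whether it failed.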

Final Thoughts

Production agents are owned systems. They need clear responsibilities, explicit tool contracts, durable workflows, and continuous evaluation. The Foundry approach — start with Responses-style code, then add persistence, tools, workflow orchestration, evaluators, and tracing — doesn't just make agents easier to build. It makes them easier to operate. And "operate" is where trust is earned.

So when building agents, add checkpoints, instrument traces, run evaluators, and define escalation paths. That's how you turn agentic AI from a clever conversation into something your team can rely on — quietly, repeatedly, and safely.