There are already 30+ alternatives to OpenClaw, and honestly, most of them were easy to ignore.

OpenClaw and its alternatives promise the same thing: agents that can run on a schedule, access local files, send Telegram messages at 6 AM, and keep working without you hovering over a chat box.

But once you actually try them, you will find out that most are heavy, overbuilt runtimes designed for simple conversational demos, not systems you would trust for serious, always-on workload swarms.

That's why OpenFang was the first one that felt worth a closer look.

It is a fully open-source Agent Operating System, written entirely in Rust, shipping as a single 32 MB binary with a 180 ms cold start.

That sounds like benchmark bait, but the real story is not the language choice or the startup speed.

Because the runtime is so lightweight, OpenFang can keep several autonomous agents running on a single VPS at the same time, 24/7, without feeling bloated.

The setup is almost suspiciously simple:

curl -fsSL https://openfang.sh/install | shRun openfang init

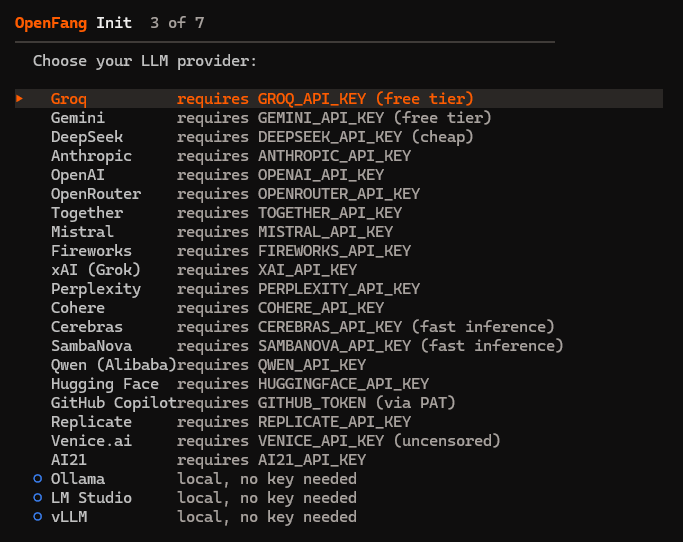

pick a model provider

then open your browser

and you are in.

In this article, I'll break down what OpenFang actually does and give you a quick start guide.

You will see where it is different from OpenClaw and the growing field of alternatives, and whether it is just another flashy agent framework or one of the few that might genuinely matter for your workloads.

What Agent Frameworks Promise vs. What They Deliver

The team behind OpenFang state it plainly:

"OpenClaw is a good chatbot, but the second you want it to do something real… it just can't. That's the design."

And this is not just an OpenClaw problem.

I ran into this exact frustration myself before I started looking at alternatives.

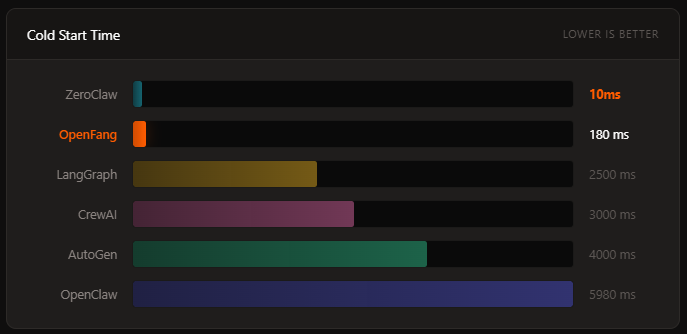

LangGraph needs 2.5 seconds to cold start and 180MB of idle memory. CrewAI sits at 200MB and ~3 seconds. AutoGen is even heavier.

Every one of these frameworks was built with the assumption that a human is sitting there, waiting, prompting.

The timing matters because the industry is moving fast toward autonomous agent workloads: agents that run on cron schedules, agents that wake up and do research, agents that manage social media accounts, agents that generate leads while you sleep.

And the existing toolchain was never built for that, which is why a runtime designed for unattended work is directionally important.


What Exactly Is OpenFang?

OpenFang is an open-source Agent Operating System built from scratch in Rust. It is MIT-licensed, currently at v0.3.34.

What is important to understand here is that this is not a Python wrapper around an LLM or a "multi-agent orchestrator."

It is a full operating system that compiles to a single binary.

Here is what that looks like in practice:

curl -fsSL https://openfang.sh/install | sh

openfang init

openfang start

# Dashboard live at http://localhost:4200

Three commands.

Your agent infrastructure is running.

The entire install is ~32MB.

Compare that to OpenClaw at ~500MB.

The Core Concept: "Hands"

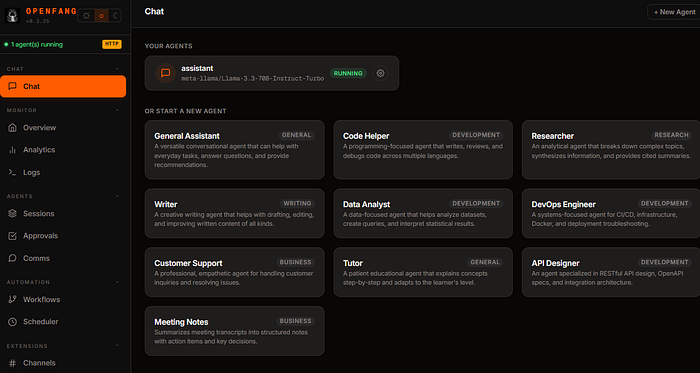

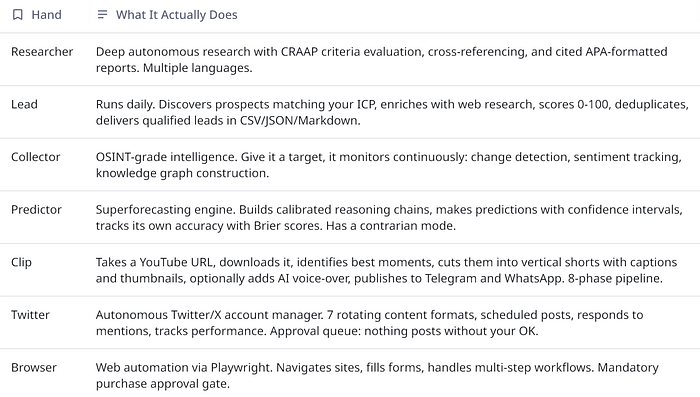

OpenFang ships with 7 pre-built autonomous agents called Hands. Each Hand is a full capability package that runs independently.

Each Hand bundles a HAND.toml manifest, a 500+ word multi-phase system prompt, a SKILL.md domain expertise reference, and guardrails for sensitive actions.

# Activate the Researcher Hand — it starts working immediately

openfang hand activate researcher

# Check its progress anytime

openfang hand status researcher

# Activate lead generation on a daily schedule

openfang hand activate lead

# Pause without losing state

openfang hand pause lead

I think this is great, given that every other framework I have used treats an agent as a "thing that responds to me." OpenFang treats it as a "thing that works for me."

The Benchmarks Only Tell One Story

The numbers are from the OpenFang repo, sourced from official documentation and public repositories:

The cold start difference is significant between OpenFang and OpenClaw.

The memory gap is nearly 10x.

If you are running agents on a VPS (which many of us are), this is the difference between running 10 agents on a cheap box versus needing to upgrade your infra.

For context: ZeroClaw (another Rust-based system) beats OpenFang on raw startup time (10ms) and memory (5MB), but it ships with only 12 built-in tools and 15 channel adapters compared to OpenFang's 53 tools and 40 adapters.

Whether that tradeoff works for you depends entirely on how much out-of-the-box functionality you need versus raw performance.
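To make the VPS argument concrete, here is a back-of-the-envelope sketch using only the idle-memory figures quoted in this article. The 2 GB box and the 512 MB reserved for the operating system are my own assumptions, and so is the worst-case idea that every agent runs as its own process with the full idle footprint. Treat it as rough arithmetic, not a benchmark.

```python
# Idle memory figures quoted in this article, in MB.
IDLE_MB = {
    "ZeroClaw": 5,
    "OpenFang": 40,
    "LangGraph": 180,
    "CrewAI": 200,
}


def agents_that_fit(total_ram_mb: int, idle_mb: int, reserved_mb: int = 512) -> int:
    """Return how many idle processes fit after reserving RAM for the OS.

    Assumption: each agent is a separate process with the quoted idle
    footprint. Real workloads need more memory while a task is running.
    """
    usable = max(total_ram_mb - reserved_mb, 0)
    return usable // idle_mb


def capacity_table(total_ram_mb: int) -> dict[str, int]:
    """Map each runtime name to the number of idle processes that fit."""
    return {name: agents_that_fit(total_ram_mb, mb) for name, mb in IDLE_MB.items()}


if __name__ == "__main__":
    # 2048 MB is an assumed "cheap box" size, chosen only for illustration.
    for name, count in capacity_table(2048).items():
        print(f"{name:10s} {count:4d} idle processes on a 2 GB box")
```

Idle memory is only a floor, so the real ceiling depends on what each agent does when it wakes up.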

I also previously covered other OpenClaw variants here:

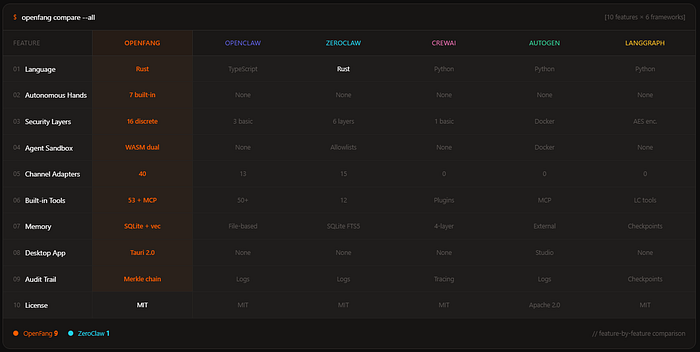

The Feature Matrix

Here's the actual feature-by-feature comparison that tells you whether something is production-ready:

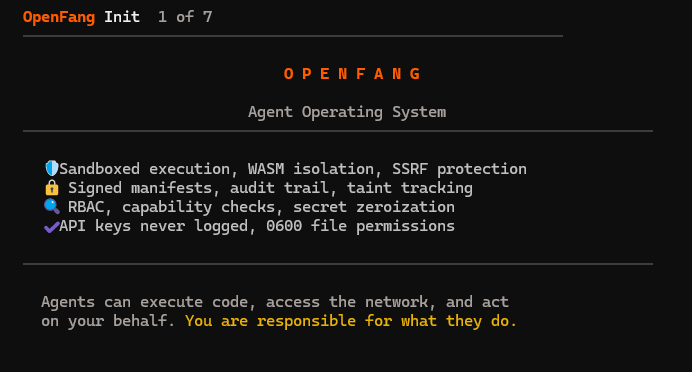

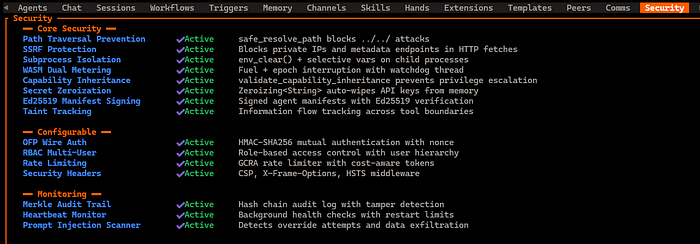

The thing that matters most for engineers reading this is the security story.

16 discrete security layers is wild for a pre-1.0 project.

WASM dual-metered sandboxing, Merkle hash-chain audit trails, Ed25519-signed agent manifests, taint tracking, SSRF protection, secret zeroization, and prompt injection scanning read like something you would expect from an enterprise security team, not an open-source project.
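If "hash-chain audit trail" sounds abstract, the core idea fits in a few lines. The sketch below is a plain hash chain written for illustration. It is not OpenFang's implementation, and the entry layout and function names are my own assumptions. Each entry's hash covers the previous entry's hash, so editing any past event breaks every link after it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event's content."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: list[dict], event: dict) -> None:
    """Append an event, linking it to the hash of the last entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": entry_hash(prev, event)})


def verify(log: list[dict]) -> bool:
    """Recompute every link; any edited event or broken link fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True
```

Appending three events and then changing a field in the second one makes `verify` return `False`, which is the tamper-evidence property an audit trail is after.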

How Is This Actually Helpful?

I have found myself being much more willing to experiment with autonomous agent patterns when the infrastructure is this lightweight.

Here is what that looks like in practice.

Scenario 1: The "Set It and Forget It" Researcher

You have a topic you need to monitor, e.g. competitor product launches.

You activate the Researcher Hand, point it at your target, and it runs on a schedule.

It wakes up, researches, cross-references sources, evaluates credibility, and delivers a cited report.

You check the dashboard when you want.

Scenario 2: Lead Generation That Actually Runs

The Lead Hand runs daily.

It discovers prospects matching your ideal customer profile, enriches them with web data, scores them, deduplicates against your existing contacts, and delivers a clean CSV.

I keep coming back to this example because it is the kind of task that agent frameworks promise but never actually deliver because they require you to babysit the process.
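To show what "scores them, deduplicates against your existing contacts, and delivers a clean CSV" boils down to, here is a toy version of that last mile. Everything in it is hypothetical: the field names, the two scoring rules, and the function names are mine, and the real Lead Hand presumably does far more.

```python
import csv
import io


def normalize_email(email: str) -> str:
    """Lowercase and trim so the same address always compares equal."""
    return email.strip().lower()


def score(lead: dict, target_industries: set[str], min_employees: int) -> int:
    """Toy score: one point per matched criterion (hypothetical rules)."""
    points = 0
    if str(lead.get("industry", "")).lower() in target_industries:
        points += 1
    if int(lead.get("employees", 0)) >= min_employees:
        points += 1
    return points


def build_lead_csv(leads, existing_emails, target_industries, min_employees=50) -> str:
    """Drop known or repeated contacts, score the rest, return CSV text."""
    seen = {normalize_email(e) for e in existing_emails}
    rows = []
    for lead in leads:
        email = normalize_email(lead["email"])
        if email in seen:
            continue  # already a contact, or a duplicate within this batch
        seen.add(email)
        rows.append({
            "email": email,
            "company": lead.get("company", ""),
            "score": score(lead, target_industries, min_employees),
        })
    rows.sort(key=lambda row: row["score"], reverse=True)  # best leads first

    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["email", "company", "score"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

The logic itself is simple. The hard part, and the part worth paying for, is having it run unattended every day without someone restarting it.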

Scenario 3: Multi-Channel Agent Deployment

This is especially helpful when I am working on projects where the agent needs to exist across multiple platforms.

OpenFang ships with 40 channel adapters:

- Core: Telegram, Discord, Slack, WhatsApp, Signal, Matrix, Email (IMAP/SMTP)

- Enterprise: Microsoft Teams, Mattermost, Google Chat, Webex, Feishu/Lark, Zulip

- Social: LINE, Viber, Facebook Messenger, Mastodon, Bluesky, Reddit, LinkedIn, Twitch

- Community: IRC, XMPP, Guilded, Revolt, Keybase, Discourse, Gitter

- Privacy: Threema, Nostr, Mumble, Nextcloud Talk, Rocket.Chat, Ntfy, Gotify

- Workplace: Pumble, Flock, Twist, DingTalk, Zalo, Webhooks

Each adapter supports per-channel model overrides, DM/group policies, rate limiting, and output formatting.
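Per-channel rate limiting is one of those features you only appreciate after a platform throttles your bot. A common way to do it is a token bucket per channel. The sketch below is a generic illustration and not OpenFang's adapter code. The class, the channel limits, and the injectable clock are all assumptions of mine.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Return True and spend a token if a message may be sent now."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill for the time that has passed, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def make_limiters(clock=time.monotonic) -> dict[str, TokenBucket]:
    """One bucket per channel. The limits here are illustrative only."""
    return {
        "telegram": TokenBucket(rate=1.0, capacity=5, clock=clock),
        "slack": TokenBucket(rate=0.5, capacity=2, clock=clock),
    }
```

An adapter would call `allow()` before each outgoing message and queue or drop the message when it returns `False`.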

If you have been building agents and then realizing you need to manually integrate with 5 different messaging platforms, you are not doing anything wrong; the other frameworks just do not solve that problem.

Scenario 4: The Personal AI Stack

One pattern that is emerging in the community is pairing OpenFang with tools like Paperclip and Obsidian as a super-stack: OpenFang's autonomous Hands execute tasks, Obsidian handles your knowledge base, and Paperclip glues them together.

The promise is your own army of agents executing the mundane, freeing up time and money for the things that matter.

Migration From Existing Frameworks

If you already have an OpenClaw setup, migration is a single command:

# Migrate everything — agents, memory, skills, configs

openfang migrate --from openclaw

# Dry run first to see what would change

openfang migrate --from openclaw --dry-run

The migration engine imports your agents, conversation history, skills, and configuration.

OpenFang reads SKILL.md natively and is compatible with the ClawHub marketplace.

Getting Started with OpenFang

If you want to try it yourself, here is the path of least resistance:

Install

# macOS / Linux

curl -sSf https://openfang.sh | sh

# Windows (PowerShell)

irm https://openfang.sh/install.ps1 | iex

# Or via Cargo (requires Rust 1.75+)

cargo install --git https://github.com/RightNow-AI/openfang openfang-cli

# Or Docker

docker pull ghcr.io/rightnow-ai/openfang:latest

Initialize and Configure

openfang init

This creates ~/.openfang/ with a default config. Set your API key:

# Pick one (or more)

export ANTHROPIC_API_KEY=sk-ant-...

export OPENAI_API_KEY=sk-...

export GROQ_API_KEY=gsk_... # Free tier available

Edit ~/.openfang/config.toml to configure your default model:

[default_model]

provider = "groq"

model = "llama-3.3-70b-versatile"

api_key_env = "GROQ_API_KEY"

[memory]

decay_rate = 0.05

[network]

listen_addr = "127.0.0.1:4200"

Verify and Launch

openfang doctor # Checks config, API keys, toolchain

openfang start # Dashboard live at http://127.0.0.1:4200

Spawn Your First Agent

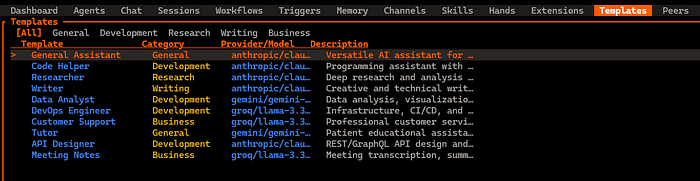

# Use a built-in template (30 available)

openfang agent spawn agents/hello-world/agent.toml

# Or activate a Hand

openfang hand activate researcher

# Chat with it

openfang chat researcher

Quick Command Reference

openfang hand activate <name> # Activate an autonomous Hand

openfang hand status <name> # Check Hand progress

openfang hand pause <name> # Pause without losing state

openfang agent spawn <manifest.toml> # Spawn a custom agent

openfang agent list # List all agents

openfang agent chat <id> # Chat with an agent

openfang migrate --from openclaw # Migrate from OpenClaw

openfang skill list # List 60 bundled skills

openfang channel setup <channel> # Set up a messaging channel

Concluding Thoughts

The architectural decision to build agents-first, chat-second is correct for where the industry is heading, and a 180ms cold start with 40MB of idle memory means you can run many agents on modest hardware.

I also think that the Hands concept, bundled autonomous capability packages with guardrails, is the right abstraction.

However, the developer experience and ecosystem polish lag significantly behind OpenClaw. For example, not all latest-generation models are supported yet (gpt-5.3-codex, for one).

The community and third-party ecosystem are also nascent, and I found that some Hands are more mature than others (Browser and Researcher are the most battle-tested).

If you have deployed OpenFang Hands in a real production workflow (lead gen, content ops, monitoring), please let me know in the comments.

If you have been building with any of these frameworks and have opinions, especially if you have production hours, not just demo hours, I would genuinely love to hear what you have found!